As we navigate the world of trading, one fundamental truth stands out: experience is the best teacher. This axiom plays an integral role in human learning, and it is just as important for machines. Imagine an algorithm that learns from experience, adapting and evolving with each encounter. That is reinforcement learning.

The idea of crafting a trading bot has long fascinated researchers and enthusiasts alike. There are many ways to train models that make effective decisions under constantly changing, real-time market conditions. Letting an algorithm make decisions on your behalf is a serious step that demands thorough research and a wealth of data. EODHD APIs provide a vast, real-time dataset that can serve as the backbone for reinforcement learning applications.

In this article, we will embark on a journey to bring a trading bot to life using the power of machine learning. We’ll explore how the principles of reinforcement learning can be harnessed to create an intelligent trading companion.


Importing the Necessary Libraries

In this step, we’re getting the tools we need and bringing them into play for the exciting adventure ahead. These packages are quite a diverse bunch, and let’s take a moment to understand why we’ve got each of them on board:

eodhd:

The official Python library of EODHD, which provides an easy, programmatic way to access the EODHD APIs.

TensorFlow:

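TensorFlow is a machine learning library used for building and training neural networks, making it ideal for tasks like deep learning, natural language processing, and computer vision. Note that Stable Baselines3 itself is built on PyTorch, and the code below does not import TensorFlow.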

Stable Baselines3:

Stable Baselines3 provides robust implementations of reinforcement learning algorithms in Python. A2C, included in this library, is an algorithm commonly used for solving sequential decision-making problems.

Gym and Gymnasium:

OpenAI Gym is a toolkit for developing and comparing reinforcement learning algorithms, offering a range of environments. Gymnasium is its maintained successor, and it is the package the code below imports as gym.

Gym Anytrading:

Gym Anytrading is an extension of OpenAI Gym tailored for reinforcement learning in financial trading environments, facilitating the development and testing of algorithms in the context of algorithmic trading.

Processing Libraries (NumPy, Pandas, Matplotlib):

NumPy enables efficient numerical operations with support for multi-dimensional arrays, while Pandas simplifies data manipulation through its DataFrame structures. Matplotlib is a versatile plotting library for creating visualizations.

```python
import gymnasium as gym
import gym_anytrading  # importing it registers the 'stocks-v0' environment

# Stable Baselines3 - reinforcement learning algorithms and utilities
from stable_baselines3.common.vec_env import DummyVecEnv
from stable_baselines3 import A2C

# Processing libraries
import numpy as np
import pandas as pd
from matplotlib import pyplot as plt

from eodhd import APIClient
```

API Key Activation

You must register your EODHD API key with the package before using its functions. If you don't have an EODHD API key, first go to the EODHD website and complete the registration process to create an account. Then navigate to the Dashboard, where you will find your secret API key. Never reveal this key to anyone. You can activate the API key with the following code:

```python
# API KEY ACTIVATION
api_key = '<YOUR API KEY>'
client = APIClient(api_key)
```

The code is simple. The first line stores the secret EODHD API key in api_key. The second line passes it to the APIClient class from the eodhd package to activate the key, and stores the resulting client object in the client variable.

Note that you need to replace <YOUR API KEY> with your secret EODHD API key. Hard-coding the key as text is not ideal. A more secure option is to read it from an environment variable.
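Here is a minimal sketch of the environment-variable approach. The variable name EODHD_API_KEY and the helper load_api_key are our own hypothetical choices. The eodhd package does not read this variable automatically, so the key is still passed to APIClient explicitly.

```python
import os


def load_api_key(var_name: str = "EODHD_API_KEY") -> str:
    """Read the EODHD API key from an environment variable instead of hard-coding it."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail early with a clear message rather than sending an empty key to the API
        raise RuntimeError(f"Set the {var_name} environment variable to your EODHD API key")
    return key


# Usage (assuming the eodhd package is installed and the variable is set):
# from eodhd import APIClient
# client = APIClient(load_api_key())
```

Raising an error when the variable is missing surfaces configuration mistakes immediately, instead of as a confusing authentication failure later.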

Extracting Historical Data

Before we extract anything, some background on historical, or end-of-day, data is useful. In a nutshell, historical data is information accumulated over a period of time. It helps identify patterns and trends and supports the study of market behavior. You can easily extract Tesla's historical data using the eodhd package with the following code:

# EXTRACTING HISTORICAL DATA

df = client.get_historical_data('TSLA', 'd', "2018-11-26","2023-11-24")

df = df[['open','high','low','close','volume']]

df.columns = ['Open','High','Low','Close','Volume']

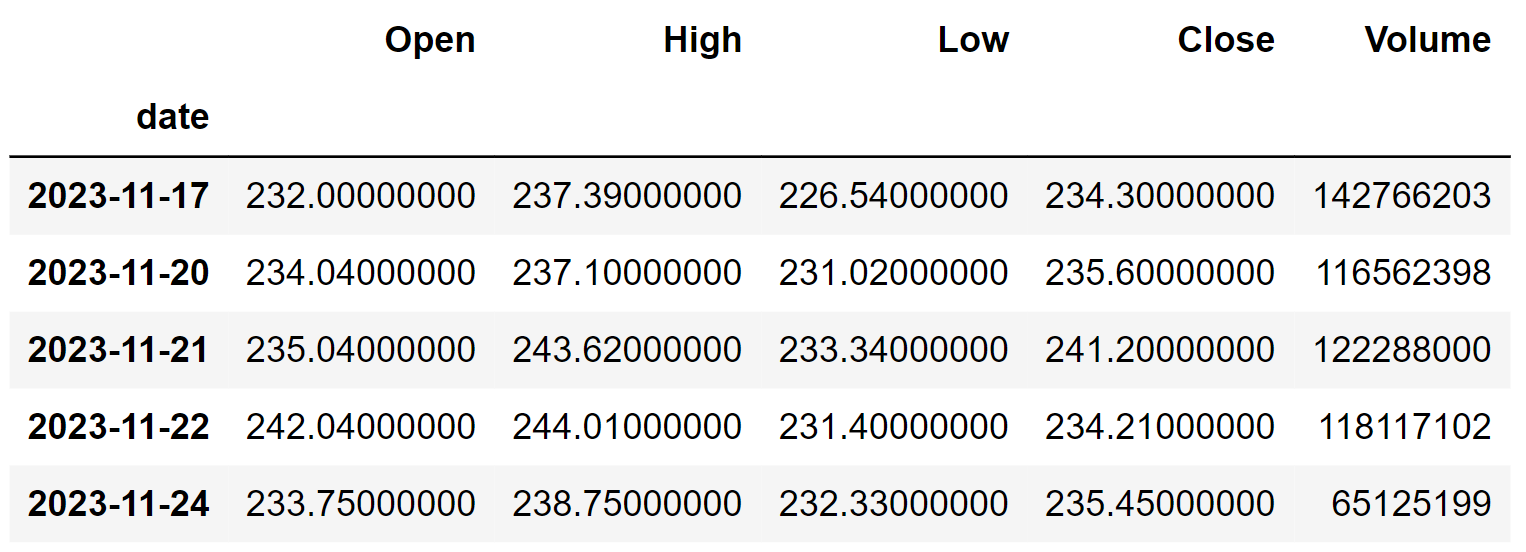

df.tail()The code used here is pretty straightforward. First, we are using the get_historical_data function provided by the eodhd package. This function takes the stock’s symbol, the time interval between the data points, and the starting and ending date of the dataframe. After that, we are performing some data manipulation processes to format and clean the extracted historical data. Here’s the final dataframe:
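The same cleaning step can be wrapped in a small reusable function. This sketch assumes the raw frame has lowercase open/high/low/close/volume columns, as in the code above. It also drops rows with missing values, which the original code does not do. The name prepare_ohlcv is hypothetical, and the function is exercised here on a tiny synthetic DataFrame, not on real EODHD output.

```python
import pandas as pd

OHLCV = ["open", "high", "low", "close", "volume"]


def prepare_ohlcv(raw: pd.DataFrame) -> pd.DataFrame:
    """Keep the OHLCV columns, capitalize their names, and drop incomplete rows."""
    df = raw[OHLCV].copy()                        # ignore extra columns such as 'symbol'
    df.columns = [c.capitalize() for c in OHLCV]  # 'close' -> 'Close', etc.
    return df.dropna()                            # rows with any missing value are removed


raw = pd.DataFrame({
    "symbol": ["TSLA", "TSLA", "TSLA"],
    "open": [1.0, 2.0, None],
    "high": [1.5, 2.5, 3.5],
    "low": [0.5, 1.5, 2.5],
    "close": [1.2, 2.2, 3.2],
    "volume": [100, 200, 300],
})
clean = prepare_ohlcv(raw)
print(clean)
```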

Using the Trading Gym

This step creates and explores a trading environment using gym-anytrading, a set of OpenAI Gym environments for trading.

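```python
env = gym.make('stocks-v0', df=df, frame_bound=(5, len(df)-1), window_size=5)
```

This creates a stocks environment backed by our Tesla data. Here, window_size is the number of past bars in each observation, and frame_bound sets the range of rows the environment trades over. You can change any of these parameters, such as the dataset or frame_bound, to suit your needs.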
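To make window_size concrete, here is a small NumPy sketch of sliding-window observations. It is loosely modeled on how gym-anytrading's stocks environment builds its default features (the closing price and its one-step change), but it is a simplified illustration, not the library's code. The helper name make_windows is hypothetical.

```python
import numpy as np


def make_windows(prices, window_size):
    """Return one (window_size, 2) array of [price, price change] rows per tick."""
    prices = np.asarray(prices, dtype=float)
    # One-step price change, with 0 for the first bar (no previous price)
    diff = np.insert(np.diff(prices), 0, 0.0)
    features = np.column_stack((prices, diff))  # shape (n, 2)
    # The window for tick t covers rows t - window_size + 1 .. t inclusive,
    # so the first full window ends at tick window_size - 1
    return np.stack([features[t - window_size + 1 : t + 1]
                     for t in range(window_size - 1, len(prices))])


prices = [10.0, 11.0, 10.5, 12.0, 12.5, 13.0]
windows = make_windows(prices, window_size=5)
print(windows.shape)  # (2, 5, 2)
```

With six prices and a window of five, only two ticks have a full look-back window. This is also why the start of frame_bound should not be smaller than window_size.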

state = env.reset()

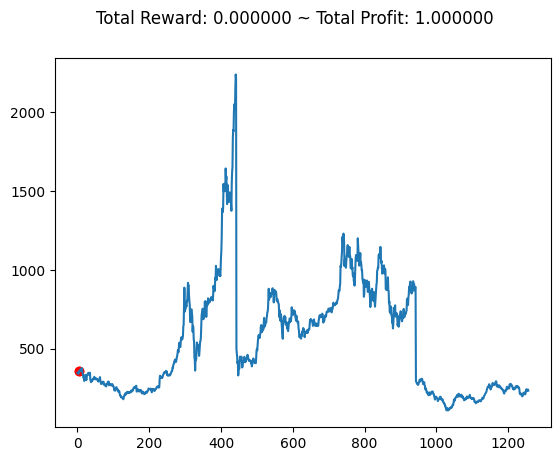

env.render()

The output illustrates how the environment perceives the dataset.

Training and Predicting the Model

In this step, we first run the environment created in the previous step with random actions. Then we use it to train a model and test that model's predictions.

state = env.reset()

while True:

action = env.action_space.sample()

observation, reward, terminated, truncated, info = env.step(action)

done = terminated or truncated

# env.render()

if done:

print("info:", info)

break

plt.cla()

env.unwrapped.render_all()

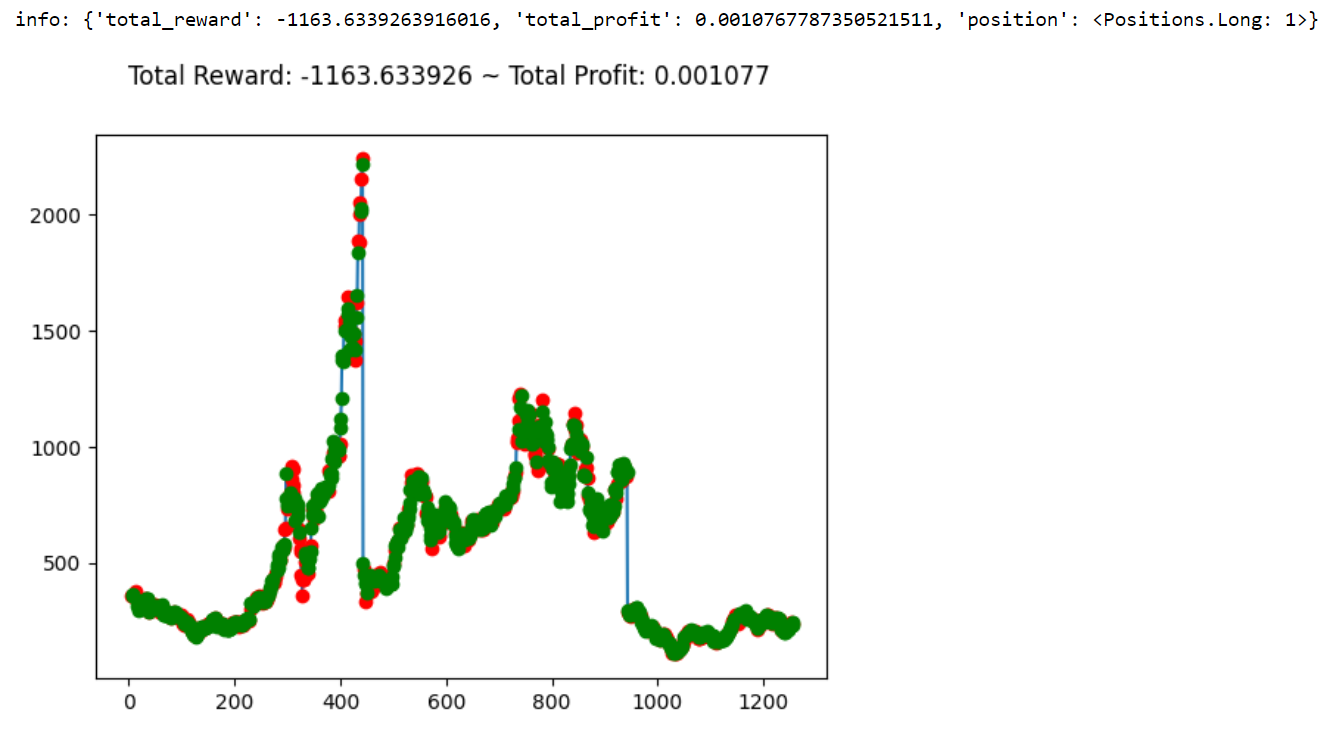

plt.show()The process involves categorizing observations, rewards, termination status, truncation, and additional information from the data, subsequently training the model for each case.

In each iteration, the agent makes a random trading decision and receives feedback: an observation, a reward, and the environment's status. The loop continues until the trading episode is either terminated or truncated.

You can uncomment env.render() to display the trading scenario at every step. When the episode ends, its info dictionary is printed for evaluation. The final lines clear the plot, render the complete trading history, and display the updated visualization. Because the actions are random, this plot serves as a reference point for judging the trained model later.

Short and long positions are shown in red and green, respectively. As you can see, the starting position of the environment is always 1.
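The reset/step protocol used in the loop above can be seen in isolation with a tiny environment that needs only NumPy. Its step returns a five-tuple with separate terminated and truncated flags. ToyTradingEnv is a hypothetical stand-in that mimics Gymnasium's return values without depending on Gymnasium. Its reward (the next price change, negated when short) is deliberately simpler than gym-anytrading's.

```python
import numpy as np


class ToyTradingEnv:
    """Hypothetical toy env mimicking Gymnasium's reset/step return values.

    Action 1 = long, action 0 = short. The reward is the next price change,
    negated when short: a simplification, not gym-anytrading's reward.
    """

    def __init__(self, prices, window_size=3, max_steps=None):
        self.prices = np.asarray(prices, dtype=float)
        self.window_size = window_size
        self.max_steps = max_steps  # optional time limit -> truncated=True

    def reset(self, seed=None):
        # seed is accepted for API compatibility but unused here
        self.t = self.window_size - 1  # first tick with a full look-back window
        self.steps = 0
        self.total_reward = 0.0
        return self._obs(), {}

    def _obs(self):
        return self.prices[self.t - self.window_size + 1 : self.t + 1]

    def step(self, action):
        change = self.prices[self.t + 1] - self.prices[self.t]
        reward = change if action == 1 else -change
        self.total_reward += reward
        self.t += 1
        self.steps += 1
        terminated = self.t == len(self.prices) - 1  # ran out of data
        truncated = self.max_steps is not None and self.steps >= self.max_steps
        info = {"total_reward": self.total_reward}
        return self._obs(), reward, terminated, truncated, info


# A random-action episode, mirroring the loop above
env = ToyTradingEnv([10.0, 11.0, 10.5, 12.0, 12.5], window_size=3)
rng = np.random.default_rng(0)
obs, info = env.reset()
while True:
    action = int(rng.integers(0, 2))  # stand-in for env.action_space.sample()
    obs, reward, terminated, truncated, info = env.step(action)
    if terminated or truncated:
        print("info:", info)
        break
```

Here terminated means the episode reached its natural end (the data ran out). Truncated means an external limit, such as max_steps, cut it short.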

```python
# A factory function: DummyVecEnv expects a list of callables that create environments
env_maker = lambda: gym.make('stocks-v0', df=df, frame_bound=(5, 100), window_size=5)
env = DummyVecEnv([env_maker])
```

This sets up a reinforcement learning environment for stock trading. The lambda function env_maker creates an instance of the 'stocks-v0' environment backed by our DataFrame (df) and restricted to rows 5 to 100 through frame_bound. DummyVecEnv wraps it in a vectorized environment, assigned to the variable env. This is the form Stable Baselines3 uses for training.
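Here is a deliberately simplified sketch of the idea behind DummyVecEnv, to make "vectorized environment" concrete. Several environments are created from factory functions and stepped in lockstep. Their outputs are stacked into arrays, and any environment whose episode ends is reset automatically. CountdownEnv and MiniVecEnv are hypothetical names for this illustration. The real Stable Baselines3 class also returns a list of per-environment info dicts and keeps the last observation of a finished episode.

```python
import numpy as np


class CountdownEnv:
    """Hypothetical stand-in env: the episode ends after `length` steps."""

    def __init__(self, length):
        self.length = length

    def reset(self, seed=None):
        self.remaining = self.length
        return np.array([self.remaining], dtype=np.float32), {}

    def step(self, action):
        self.remaining -= 1
        obs = np.array([self.remaining], dtype=np.float32)
        terminated = self.remaining == 0
        return obs, float(action), terminated, False, {}


class MiniVecEnv:
    """Step several envs in lockstep, stack results, auto-reset finished ones."""

    def __init__(self, env_fns):
        self.envs = [fn() for fn in env_fns]  # factories are called here, once each

    def reset(self):
        return np.stack([env.reset()[0] for env in self.envs])

    def step(self, actions):
        obs, rewards, dones = [], [], []
        for env, action in zip(self.envs, actions):
            o, r, terminated, truncated, _ = env.step(action)
            done = terminated or truncated
            if done:
                o, _ = env.reset()  # the batch never contains a finished env
            obs.append(o)
            rewards.append(r)
            dones.append(done)
        return np.stack(obs), np.array(rewards), np.array(dones)


vec = MiniVecEnv([lambda: CountdownEnv(2), lambda: CountdownEnv(3)])
obs = vec.reset()
obs, rewards, dones = vec.step([1, 1])
obs, rewards, dones = vec.step([1, 1])
print(obs[:, 0], dones)
```

Passing factories rather than ready-made environments lets the wrapper decide when and how many environments to build. That is why the article hands DummyVecEnv a lambda.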

```python
model = A2C('MlpPolicy', env, verbose=1)
model.learn(total_timesteps=1000000)
```

This creates an A2C (Advantage Actor-Critic) reinforcement learning model on the stock trading environment configured above. It uses the 'MlpPolicy', a multilayer perceptron network. The learn method then trains the model for a total of one million time steps.

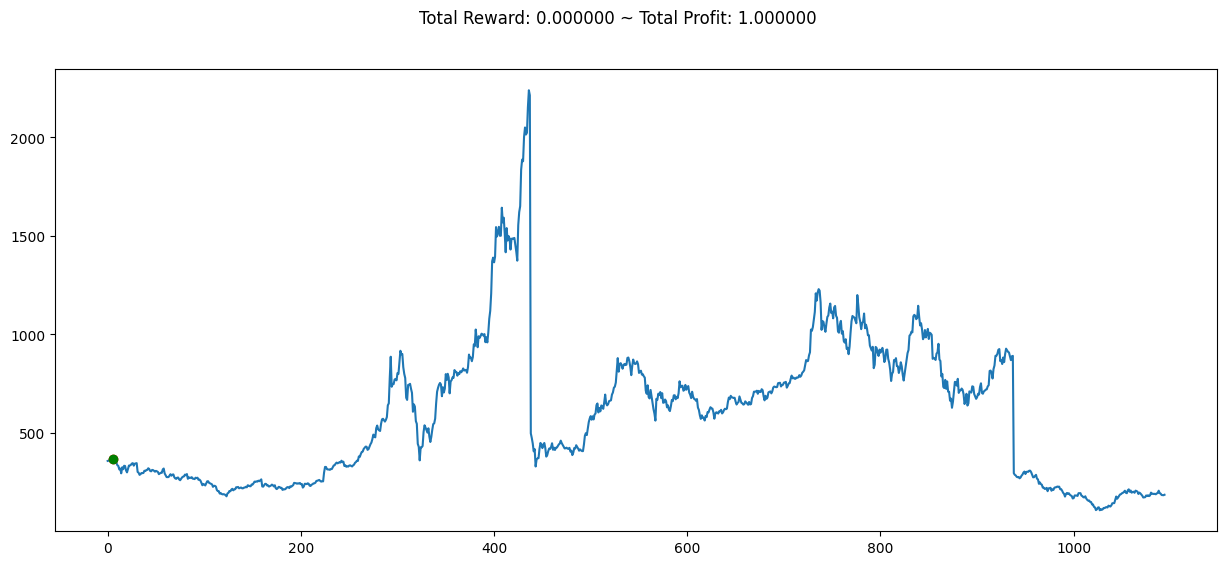
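Here is what the "advantage" in Advantage Actor-Critic means. The critic estimates how good each state is, and the advantage measures how much better the observed, discounted rewards turned out than that estimate. The sketch below computes n-step returns and advantages for a single short rollout with NumPy. It uses the textbook formulation with made-up numbers, not Stable Baselines3's internal code. SB3's A2C, for instance, defaults to 5-step rollouts with gamma=0.99. The function name n_step_advantages is hypothetical.

```python
import numpy as np


def n_step_advantages(rewards, values, last_value, dones, gamma=0.99):
    """Discounted returns bootstrapped from the critic, minus the critic's baseline.

    rewards, values, dones: length-n sequences from one rollout of one env;
    dones[t] = 1 if the episode ended after step t.
    last_value: the critic's estimate V(s_n) for the state after the rollout.
    """
    n = len(rewards)
    returns = np.zeros(n)
    running = last_value
    for t in reversed(range(n)):
        # An episode end cuts the bootstrap: no future value after a terminal step
        running = rewards[t] + gamma * running * (1.0 - dones[t])
        returns[t] = running
    advantages = returns - np.asarray(values, dtype=float)
    return returns, advantages


# gamma=0.5 keeps the arithmetic easy to follow by hand
returns, advantages = n_step_advantages(
    rewards=[1.0, 0.0, 1.0], values=[0.5, 0.5, 0.5],
    last_value=1.0, dones=[0, 0, 0], gamma=0.5,
)
# returns -> 1.375, 0.75, 1.5; advantages -> 0.875, 0.25, 1.0
```

A positive advantage pushes the actor to make that action more likely, and a negative one pushes it the other way. Meanwhile the critic is trained to bring its value estimates closer to the returns.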

Following this, a new stock trading environment is established, featuring a modified time frame. The trained model then engages with this environment in a loop, making predictions and executing actions. The loop persists until the trading episode concludes, and details about the episode are printed.

Lastly, a plot depicting the entire trading environment is generated and presented for visual assessment. This script serves as a foundational framework for training and assessing a reinforcement learning model tailored for stock trading.

```python
# Evaluate the trained model on a longer time frame
env = gym.make('stocks-v0', df=df, frame_bound=(10, 1100), window_size=5)
obs, info = env.reset()

while True:
    # The model accepts a single observation and returns a single action
    action, _states = model.predict(obs)
    obs, reward, terminated, truncated, info = env.step(int(action))
    done = terminated or truncated
    if done:
        print("info", info)
        break

plt.figure(figsize=(15, 6))
plt.cla()
env.unwrapped.render_all()
plt.show()
```

The graph shows the trained model's trading decisions over the given time frame. You can compare it with the random-action graph we obtained earlier. It offers insight into the model's decision-making process and into how the market moved over the specified period. Keep in mind that the model was trained on rows 5 to 100, which overlap the evaluation frame of rows 10 to 1,100. Part of this run is therefore on data the model has already seen.
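To make the evaluation run use only rows the agent never trained on, one option is to derive both frame_bound tuples from a single split point. The helper below is a hypothetical sketch. It assumes that gym-anytrading's stocks environment reads rows [start - window_size, end) for a given frame_bound=(start, end). For that reason, the test start is pushed window_size rows past the split, which keeps even its first observation window out of the training rows.

```python
def split_frame_bounds(n_rows, window_size, train_frac=0.8):
    """Hypothetical helper: non-overlapping frame_bound tuples for training and testing."""
    if not 0.0 < train_frac < 1.0:
        raise ValueError("train_frac must be between 0 and 1")
    split = int(n_rows * train_frac)
    train_bounds = (window_size, split)            # reads rows [0, split)
    test_bounds = (split + window_size, n_rows)    # reads rows [split, n_rows)
    if test_bounds[0] >= test_bounds[1]:
        raise ValueError("not enough rows left for a test window")
    return train_bounds, test_bounds


train_bounds, test_bounds = split_frame_bounds(n_rows=1000, window_size=5, train_frac=0.75)
print(train_bounds, test_bounds)  # (5, 750) (755, 1000)

# Usage with the article's objects (not run here):
# train_env = DummyVecEnv([lambda: gym.make('stocks-v0', df=df,
#                          frame_bound=train_bounds, window_size=5)])
# test_env = gym.make('stocks-v0', df=df, frame_bound=test_bounds, window_size=5)
```

A chronological split like this, rather than a random one, avoids training on prices that come after the ones used for evaluation.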

Conclusion

The idea of entrusting decisions to someone based on their experience and knowledge is undeniably intriguing. AI, and reinforcement learning in finance in particular, holds immense potential that has not yet been fully tapped. It has ample room to evolve into something far more significant and impactful.